- Google has announced the eighth generation of Tensor Processing Units (TPUs) for its data centers.

- The new class of TPUs is split based on usage, with separate units for training and inference.

- Google says this reduces energy requirements for actual end use, which in turn should benefit the environment.

Last year at its Google Cloud Next event, Google announced the Ironwood class of tensor processing units (TPUs) that power its data centers. These TPUs, designed with the AI era in mind, focused on large-scale inference: an AI model's ability to draw conclusions or make predictions from what it was trained on, without having seen the exact answer before (basically what chatbots do). This year, Google has made further advancements to the TPU hardware and is now splitting compute to serve training and inference differently.

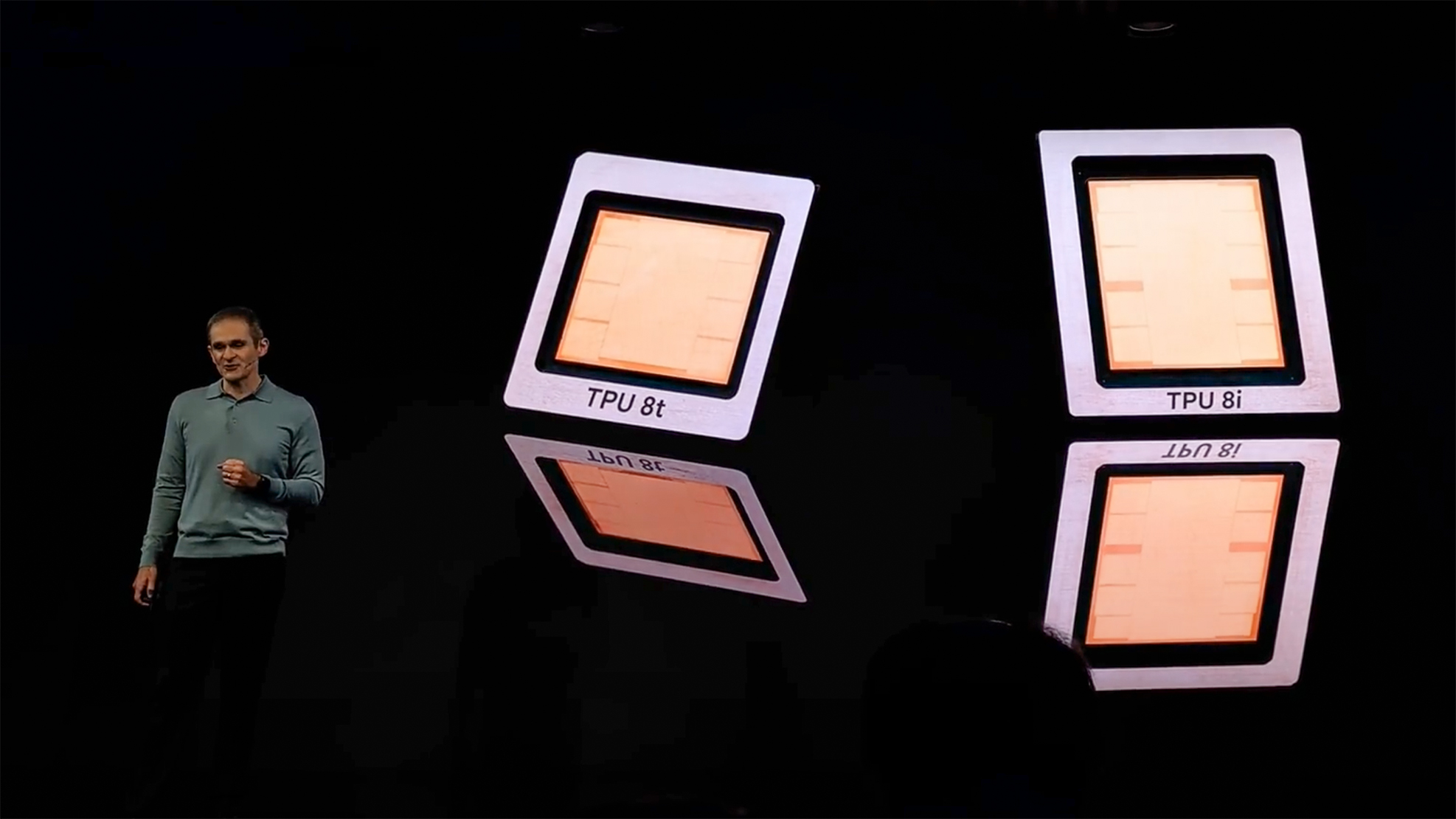

At Cloud Next 2026, Google announced its eighth generation of TPUs, with different architectures for different purposes. The newly introduced TPUs include the TPU 8t, which will be used for training AI models, and the TPU 8i, which is dedicated to inference.