For a chatbot to become more capable, and thus more useful to the end-user, its underlying model needs to be fed large amounts of data. This process is known as “training.” The problem is that many AI companies never explicitly ask data owners for consent before scraping their webpages and adding the data to the training corpora of the large language models (LLMs) that power AI chatbots.

But some of those data owners, also known as content creators or IP holders, are now fighting back. They are doing this by using tools known as “tarpits.” Their aim? To poison the chatbot’s underlying LLM and thus degrade the quality of its outputs, potentially causing end-user flight. Here’s what you need to know.

What is AI poisoning?

AI poisoning is the process of corrupting an AI chatbot’s underlying large language model so that the chatbot gives incorrect, misleading, or utterly bonkers outputs. This corruption is achieved by tricking the LLM into assimilating incorrect data during its training, which often relies on data scraped from as many websites and images as an AI company’s crawlers can reach.

There are many ways an LLM can be poisoned, depending on the capabilities of the LLM that the poisoner wants to disrupt.

For example, if someone wanted to poison an image-generating model, they could use a technique known as “Nightshading,” which involves using a piece of software called Nightshade to make subtle pixel-level changes to an image. These changes are imperceptible to the human eye but are picked up by models that train on the scraped image. They make the artwork look to the AI as if it’s in a different style than it actually is (say, abstract rather than realistic), which prevents the model from mimicking the artist’s actual style.

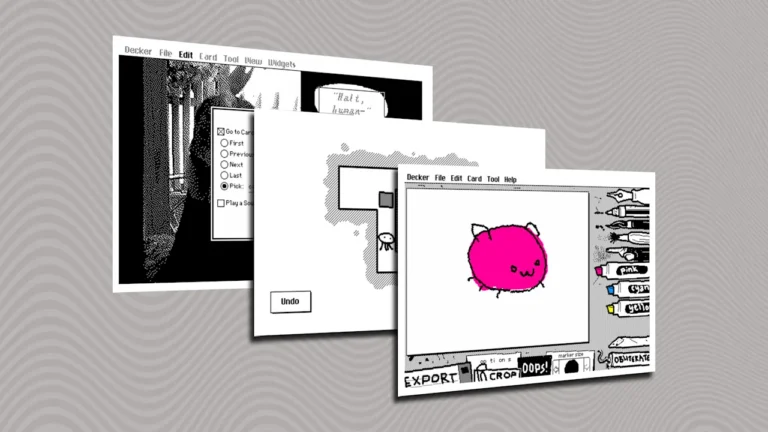

Of course, the majority of chatbots deal with text, not images, rendering poisoning tools like Nightshade useless against unauthorized AI scraping of articles and blogs. But a new type of AI poisoning tool has been making the rounds, one that aims to trick LLMs into training on useless data. These tools are known as tarpits.

What are AI tarpits?

AI tarpits are a specific type of AI poisoning tool designed to trick the crawlers that collect training data for LLMs into ingesting useless data. If the LLM is then trained on this junk data, its text outputs are more likely to be incorrect, which degrades the quality of the AI’s responses and, ultimately, could discourage users from using the chatbot.

There are numerous tarpits that content creators and IP holders can add to their websites, including Nepenthes, Iocaine, and Quixotic. When an LLM crawler visits a website that has a tarpit deployed, the crawler is steered toward automatically generated, useless text that is either riddled with incorrect information (e.g., Steve Jobs founded Microsoft in 1834) or completely nonsensical (e.g., the color of water is pepperoni).

Further, these pages of poisoned text link out to additional pages of poisoned text, none of which have exit links. Thus, much like a physical tarpit traps an animal that wanders into it, an AI tarpit traps the LLM crawler in an endless loop of ingesting incorrect data, unable to exit.
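To make the mechanism concrete, here is a minimal Python sketch of the idea: a page of filler text whose only links lead to more generated pages. It is an illustration under stated assumptions, not how Nepenthes, Iocaine, or Quixotic are actually implemented. The word list and the names `render_tarpit_page` and `extract_links` are hypothetical and exist only for this example.

```python
import hashlib
import random
import re

# Hypothetical vocabulary; a real tool would draw on far more varied text.
WORDS = ["water", "pepperoni", "founded", "color", "galaxy",
         "invoice", "lantern", "theory", "marble", "quietly"]


def render_tarpit_page(path: str, n_sentences: int = 5, n_links: int = 3) -> str:
    """Return an HTML page of filler text whose links all lead deeper into the maze."""
    # Seed the generator from the URL path so the same URL always yields
    # the same page, without storing any pages on disk.
    digest = hashlib.sha256(path.encode("utf-8")).digest()
    rng = random.Random(int.from_bytes(digest[:8], "big"))

    # Build a few sentences of meaningless text.
    sentences = []
    for _ in range(n_sentences):
        words = [rng.choice(WORDS) for _ in range(rng.randint(6, 12))]
        sentences.append(" ".join(words).capitalize() + ".")

    # Every link points to a child of the current path: there is no exit link.
    base = path.rstrip("/")
    links = []
    for _ in range(n_links):
        slug = f"{rng.getrandbits(32):08x}"
        links.append(f'<a href="{base}/{slug}">{slug}</a>')

    return ("<html><body><p>" + " ".join(sentences) + "</p><p>"
            + " ".join(links) + "</p></body></html>")


def extract_links(html: str) -> list[str]:
    """Pull href targets out of a page, roughly as a simple crawler might."""
    return re.findall(r'href="([^"]+)"', html)
```

A crawler that starts at a hypothetical `/maze` URL and follows any link from `extract_links(render_tarpit_page("/maze"))` lands on another generated page with three more links under the same prefix, and so on indefinitely. Real deployments would also need to decide which visitors get routed into the maze, which this sketch does not cover.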

How can the average user protect their data from AI companies?

Content creators and IP holders use tarpits to waste AI companies’ valuable resources and prevent LLMs from assimilating a website’s data without consent.

But even if you aren’t a content creator or IP holder, you should be aware that AI companies may be using your data to train their models, too. Depending on the provider and your settings, the prompts you type into an AI chatbot and the conversations you have with it may be retained and added to the LLM’s training corpus, with the goal of making the chatbot’s LLM even more robust.

The good news is that you don’t have to resort to specialized tools like tarpits to protect your data from chatbots. Instead, you can explicitly instruct chatbots not to train on your data, use chatbots through proxies to obscure your identity, or use everyday software tools to redact your sensitive data before you upload any documents to a chatbot for analysis.
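As a rough illustration of the redaction step, the following Python sketch replaces a few common kinds of sensitive data with placeholders before text is pasted or uploaded. The patterns (email addresses, US-style Social Security numbers, and US-style phone numbers) and the `redact` function are assumptions chosen for this example. They will miss many formats, so treat this as a starting point rather than a complete safeguard.

```python
import re

# Illustrative patterns only; real documents contain many more formats.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace every match of each pattern with a labeled placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label} REDACTED]", text)
    return text


if __name__ == "__main__":
    print(redact("Reach me at jane.doe@example.com or 555-123-4567."))
```

Running the redaction locally, before anything leaves your machine, means the chatbot only ever receives the placeholder text.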